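[PyTorch Study Notes] 5. Convolutional Neural Networks

Contents

- 32. Convolutional neural networks

- 33. Pooling layers & up/down sampling

- 34. Batch normalization

- 35. Classic convolutional networks

- 36. Residual networks

- 37. nn.Module

- 38. Data augmentation

- 39. Hands-on practice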

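These notes are compiled from Long Liangqu's PyTorch video course. Video links:

[Computer Science / AI] PyTorch: This One Course Is All You Need!

(Recommended course) Deep Learning and PyTorch: A Hands-on Introduction, taught by Long Liangqu

32. Convolutional neural networks

For the underlying theory, Andrew Ng's course is still the better reference.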

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    # Convolution layer: 1 input channel, 3 output channels, 3x3 kernel
    x = torch.rand(1, 1, 28, 28)

    layer = nn.Conv2d(1, 3, kernel_size=3, stride=1, padding=0)
    out = layer.forward(x)
    print(out.size())  # torch.Size([1, 3, 26, 26])

    layer = nn.Conv2d(1, 3, kernel_size=3, stride=1, padding=1)
    out = layer.forward(x)
    print(out.size())  # torch.Size([1, 3, 28, 28])

    layer = nn.Conv2d(1, 3, kernel_size=3, stride=2, padding=1)
    out = layer.forward(x)
    print(out.size())  # torch.Size([1, 3, 14, 14])

    # Prefer layer(x), which goes through __call__, over calling forward() directly
    out = layer(x)
    print(out.size())  # torch.Size([1, 3, 14, 14])

    # print(layer.weight)
    print(layer.weight.shape)  # torch.Size([3, 1, 3, 3])
    print(layer.bias.shape)  # torch.Size([3])

    # Functional form: the weight and bias are passed in explicitly
    w = torch.rand(16, 3, 5, 5)
    b = torch.rand(16)
    x = torch.randn(1, 3, 28, 28)
    out = F.conv2d(x, w, b, stride=2, padding=2)
    print(out.shape)  # torch.Size([1, 16, 14, 14])
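The shapes in the comments of the convolution example follow the usual size formula for a convolution, $H_{out} = \lfloor (H_{in} + 2p - k) / s \rfloor + 1$, with kernel size $k$, stride $s$ and padding $p$. The following is a minimal, framework-free sketch; the helper name conv_out_size is made up for illustration, and dilation is assumed to be 1.

```python
def conv_out_size(size_in, kernel_size, stride=1, padding=0):
    """Spatial output size of a convolution (dilation assumed to be 1)."""
    return (size_in + 2 * padding - kernel_size) // stride + 1


# The four configurations used in the notes, all on a 28x28 input
print(conv_out_size(28, kernel_size=3, stride=1, padding=0))  # 26
print(conv_out_size(28, kernel_size=3, stride=1, padding=1))  # 28
print(conv_out_size(28, kernel_size=3, stride=2, padding=1))  # 14
print(conv_out_size(28, kernel_size=5, stride=2, padding=2))  # 14
```

The same formula applies independently to height and width, which is why a square input stays square here.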

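33. Pooling layers & up/down sampling

Pooling shrinks the feature map. It is not the same as plain subsampling (down sampling by keeping every other row and column): pooling summarizes each window, whereas subsampling discards the skipped values.

- Max pooling

- Avg pooling

Pooling layer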

    x = out
    print(x.shape)  # torch.Size([1, 16, 14, 14])

    layer = nn.MaxPool2d(2, stride=2)
    out = layer(x)
    print(out.shape)  # torch.Size([1, 16, 7, 7])

    out = F.avg_pool2d(x, 2, stride=2)
    print(out.shape)  # torch.Size([1, 16, 7, 7])
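To make the difference between pooling and plain subsampling concrete, here is a small pure-Python sketch on a 4x4 "image" stored as nested lists. The function names are illustrative only and are not part of PyTorch.

```python
def max_pool_2x2(img):
    """2x2 max pooling with stride 2 on a list-of-lists image."""
    return [
        [max(img[r][c], img[r][c + 1], img[r + 1][c], img[r + 1][c + 1])
         for c in range(0, len(img[0]) - 1, 2)]
        for r in range(0, len(img) - 1, 2)
    ]


def avg_pool_2x2(img):
    """2x2 average pooling with stride 2."""
    return [
        [(img[r][c] + img[r][c + 1] + img[r + 1][c] + img[r + 1][c + 1]) / 4
         for c in range(0, len(img[0]) - 1, 2)]
        for r in range(0, len(img) - 1, 2)
    ]


def subsample_2(img):
    """Keep every other row and column, discarding the rest."""
    return [row[::2] for row in img[::2]]


img = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
print(max_pool_2x2(img))  # [[6, 8], [14, 16]]
print(avg_pool_2x2(img))  # [[3.5, 5.5], [11.5, 13.5]]
print(subsample_2(img))   # [[1, 3], [9, 11]]
```

All three halve the resolution, but only pooling lets every input value influence the result.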

Up sample

    # Upsampling: nearest-neighbor interpolation doubles the height and width
    x = out
    out = F.interpolate(x, scale_factor=2, mode='nearest')
    print(out.shape)  # torch.Size([1, 16, 14, 14])
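Nearest-neighbor upsampling with a scale factor of 2 simply repeats every pixel in a 2x2 block. The sketch below shows the idea in plain Python; upsample_nearest is an illustrative helper, not the PyTorch implementation.

```python
def upsample_nearest(img, scale=2):
    """Nearest-neighbor upsampling: each pixel becomes a scale x scale block."""
    out = []
    for row in img:
        # Repeat every value along the width ...
        wide = [v for v in row for _ in range(scale)]
        # ... then repeat the widened row along the height
        out.extend(list(wide) for _ in range(scale))
    return out


small = [[1, 2],
         [3, 4]]
for row in upsample_nearest(small):
    print(row)
# [1, 1, 2, 2]
# [1, 1, 2, 2]
# [3, 3, 4, 4]
# [3, 3, 4, 4]
```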

ReLU

    # ReLU
    print(x.shape)  # torch.Size([1, 16, 7, 7])

    layer = nn.ReLU(inplace=True)  # inplace=True overwrites x instead of allocating a new tensor
    out = layer(x)
    print(out.shape)  # torch.Size([1, 16, 7, 7])

    out = F.relu(x)
    print(out.shape)  # torch.Size([1, 16, 7, 7])

34. Batch normalization

1d

    import torch
    import torch.nn as nn

    x = torch.randn(100, 16, 784)
    # affine=True makes the scale (gamma) and shift (beta) learnable parameters
    layer = nn.BatchNorm1d(16, momentum=0.1, affine=True)
    # layer.eval()  # add this at test time

    # Running statistics after a single forward pass
    out = layer(x)
    print(layer.running_mean)
    print(layer.running_var)

    # Running statistics after 100 more forward passes
    for i in range(100):
        out = layer(x)
    print(layer.running_mean)
    print(layer.running_var)

    """
    tensor([-3.3207e-04, -2.9735e-04,  8.1316e-04,  9.3234e-05,  1.9049e-04,
             6.9931e-04,  2.9378e-04,  3.1153e-05,  3.4325e-04,  3.2283e-04,
            -2.0425e-04, -4.0346e-04,  1.7246e-04, -1.9482e-04, -1.2086e-04,
            -8.4132e-04])
    tensor([0.9999, 0.9999, 1.0002, 1.0006, 0.9997, 1.0003, 1.0000, 1.0002, 1.0003,
            1.0000, 0.9998, 1.0000, 1.0005, 0.9996, 0.9997, 0.9999])
    tensor([-0.0033, -0.0030,  0.0081,  0.0009,  0.0019,  0.0070,  0.0029,  0.0003,
             0.0034,  0.0032, -0.0020, -0.0040,  0.0017, -0.0019, -0.0012, -0.0084])
    tensor([0.9986, 0.9987, 1.0023, 1.0065, 0.9969, 1.0033, 0.9997, 1.0022, 1.0029,
            1.0004, 0.9981, 0.9999, 1.0053, 0.9957, 0.9972, 0.9985])
    """

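layer.running_mean and layer.running_var hold the global (running) mean and variance, not the statistics of the current batch; after the first call only one batch has been processed. There is currently no direct way to read these two statistics for an individual batch. Each forward pass in training mode updates the running mean (and, analogously, the running variance):

$$\mu' = (1 - m)\,\mu + m\,\mu_t$$

For example, with $m = 0.1$, $\mu = 0$ and $\mu_t = 0.5$: $\mu' = (1 - 0.1) \times 0 + 0.1 \times 0.5 = 0.05$.

The default momentum is $m = 0.1$; $\mu$ is the running mean before the update (initialized to 0) and $\mu_t$ is the mean of the current batch. After many forward passes, the running mean and variance approach the statistics of the true data distribution.

Note: call layer.eval() at test time. In eval mode the layer normalizes with the running statistics instead of the batch statistics and stops updating them; since testing runs no backward pass, $\beta$ and $\gamma$ are not updated either.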
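The update rule is an exponential moving average, so starting from $\mu = 0$ and feeding the same batch mean $n$ times gives $\mu_n = (1 - (1 - m)^n)\,\mu_t$. The sketch below replays this in plain Python with the illustrative value $\mu_t = 0.5$ from the worked example; update_running_mean is a hypothetical helper, not a PyTorch function.

```python
def update_running_mean(running_mean, batch_mean, momentum=0.1):
    """One exponential-moving-average step: mu' = (1 - m) * mu + m * mu_t."""
    return (1 - momentum) * running_mean + momentum * batch_mean


batch_mean = 0.5     # illustrative value of mu_t
running_mean = 0.0   # running statistics start at 0

# One forward pass: the running mean moves 10% of the way to the batch mean
running_mean = update_running_mean(running_mean, batch_mean)
print(round(running_mean, 4))  # 0.05

# 100 more forward passes on the same batch: it converges to the batch mean
for _ in range(100):
    running_mean = update_running_mean(running_mean, batch_mean)
print(round(running_mean, 4))  # 0.5
```

This matches the printed tensors in the 1d example, where the values after the loop are roughly ten times the values after the first pass.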

2d

    # Continuing from the convolution example above
    print(x.shape)  # torch.Size([1, 16, 7, 7])

    layer = nn.BatchNorm2d(16)
    out = layer(x)
    print(out.shape)  # torch.Size([1, 16, 7, 7])

    print(layer.weight.shape)  # torch.Size([16])
    print(layer.bias.shape)  # torch.Size([16])
    print(vars(layer))  # all attributes of the layer
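BatchNorm2d keeps one weight ($\gamma$) and one bias ($\beta$) per channel, which is why both have shape [16]. In training mode each channel is normalized with the mean and variance computed over its batch, height and width positions. The following is a rough pure-Python sketch for a single channel whose values are flattened into one list; batch_norm_channel is an illustrative name.

```python
import math


def batch_norm_channel(values, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one channel's values with their own batch statistics."""
    n = len(values)
    mean = sum(values) / n
    # Biased variance (divide by n) is what is used for the normalization itself
    var = sum((v - mean) ** 2 for v in values) / n
    return [gamma * (v - mean) / math.sqrt(var + eps) + beta for v in values]


channel = [2.0, 4.0, 6.0, 8.0]
normed = batch_norm_channel(channel)
print([round(v, 3) for v in normed])  # [-1.342, -0.447, 0.447, 1.342]
```

With the default $\gamma = 1$ and $\beta = 0$, the output of each channel has mean close to 0 and variance close to 1.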

Advantages

- Converge faster

- Better performance

- Robust

  - stable

  - larger learning rate

35. Classic convolutional networks

ImageNet: LeNet-5 → AlexNet → VGG → GoogLeNet → ResNet → Inception

36. Residual networks

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class ResBlk(nn.Module):
        def __init__(self, ch_in, ch_out):
            super(ResBlk, self).__init__()  # required before registering submodules

            self.conv1 = nn.Conv2d(ch_in, ch_out, kernel_size=3, stride=1, padding=1)
            self.bn1 = nn.BatchNorm2d(ch_out)
            # conv2 receives the ch_out channels produced by conv1
            self.conv2 = nn.Conv2d(ch_out, ch_out, kernel_size=3, stride=1, padding=1)
            self.bn2 = nn.BatchNorm2d(ch_out)

            # Shortcut branch: identity when the channel counts already match
            self.extra = nn.Sequential()
            if ch_out != ch_in:
                # [b, ch_in, h, w] => [b, ch_out, h, w]
                self.extra = nn.Sequential(
                    nn.Conv2d(ch_in, ch_out, kernel_size=1, stride=1),
                    nn.BatchNorm2d(ch_out)
                )

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.bn2(self.conv2(out))
            # Add the shortcut branch to the main branch
            out = self.extra(x) + out
            return out

37. nn.Module

- Every layer is an nn.Module

- nn.Module can be nested in nn.Module

- Built-in layers

  - Linear, ReLU, Sigmoid, Conv2d, ConvTranspose2d, Dropout, etc.

- Container

  - net(x)

- parameters

- modules

  - modules: all nodes

  - children: direct children

- to(device)

- save and load

  - net.load_state_dict(torch.load('ckpt.mdl'))

  - torch.save(net.state_dict(), 'ckpt.mdl')

- train / test

  - net.train()

  - net.eval()

- Implement your own layer

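    class Flatten(nn.Module):
        def __init__(self):
            super(Flatten, self).__init__()

        def forward(self, input):
            # [b, c, h, w] => [b, c*h*w]
            return input.view(input.size(0), -1)


    class TestNet(nn.Module):
        def __init__(self):
            super(TestNet, self).__init__()
            # Assumes input of shape [b, 1, 28, 28]
            self.net = nn.Sequential(
                # nn.Conv2d requires kernel_size; 3 is assumed here so that padding=1 keeps 28x28
                nn.Conv2d(1, 16, kernel_size=3, stride=1, padding=1),
                nn.MaxPool2d(2, 2),
                Flatten(),
                # 16 channels of 14x14 remain after pooling
                nn.Linear(16*14*14, 10)
            )

        def forward(self, x):
            return self.net(x)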
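What net.parameters() iterates over can be checked by hand. The sketch below counts the learnable values of a TestNet-like stack under two assumptions that are not stated in the notes: a 3x3 convolution kernel and a 28x28 single-channel input, so that the flattened size is 16*14*14. The helper names are hypothetical.

```python
def conv2d_param_count(ch_in, ch_out, kernel_size, bias=True):
    """Weights of shape [ch_out, ch_in, k, k] plus one bias per output channel."""
    return ch_out * ch_in * kernel_size * kernel_size + (ch_out if bias else 0)


def linear_param_count(in_features, out_features, bias=True):
    """Weights of shape [out_features, in_features] plus one bias per output."""
    return out_features * in_features + (out_features if bias else 0)


conv_params = conv2d_param_count(1, 16, kernel_size=3)  # assumed 3x3 kernel
fc_params = linear_param_count(16 * 14 * 14, 10)        # assumed flattened size

# MaxPool2d and Flatten have no learnable parameters
print(conv_params)              # 160
print(fc_params)                # 31370
print(conv_params + fc_params)  # 31530
```

In PyTorch the corresponding total would normally be obtained with sum(p.numel() for p in net.parameters()).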

38. Data augmentation

Limited data

- Small network capacity

- Regularization

- Data augmentation (will help, but not much)

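Data augmentation

- Flip

  - transforms.RandomHorizontalFlip(): random horizontal flip

  - transforms.RandomVerticalFlip(): random vertical flip

- Rotate

  - transforms.RandomRotation(15): rotate by a random angle within ±15 degrees

  - transforms.RandomChoice([transforms.RandomRotation((a, a)) for a in (90, 180, 270)]): rotate by one of the listed angles. RandomRotation itself accepts only a single number or a (min, max) pair, so RandomRotation([90, 180, 270]) is rejected.

- Scale

  - transforms.Resize([32, 32])

- Random move & crop

  - transforms.RandomCrop([28, 28])

- Noise

- GAN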
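What the flip and crop transforms do to the pixel grid can be sketched in plain Python on a tiny nested-list image. This only illustrates the geometry; the function names are made up, and torchvision applies each transform randomly to PIL images or tensors.

```python
import random


def horizontal_flip(img):
    """Mirror each row (left <-> right)."""
    return [row[::-1] for row in img]


def vertical_flip(img):
    """Reverse the row order (top <-> bottom)."""
    return img[::-1]


def random_crop(img, size, rng):
    """Cut a size x size window at a random position."""
    top = rng.randint(0, len(img) - size)
    left = rng.randint(0, len(img[0]) - size)
    return [row[left:left + size] for row in img[top:top + size]]


img = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
print(horizontal_flip(img))  # [[3, 2, 1], [6, 5, 4], [9, 8, 7]]
print(vertical_flip(img))    # [[7, 8, 9], [4, 5, 6], [1, 2, 3]]

rng = random.Random(0)       # seeded only to make the sketch repeatable
crop = random_crop(img, 2, rng)
print(len(crop), len(crop[0]))  # 2 2
```

None of these operations changes the label of the image, which is what makes them usable as augmentation for classification.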

39. Hands-on practice

main.py

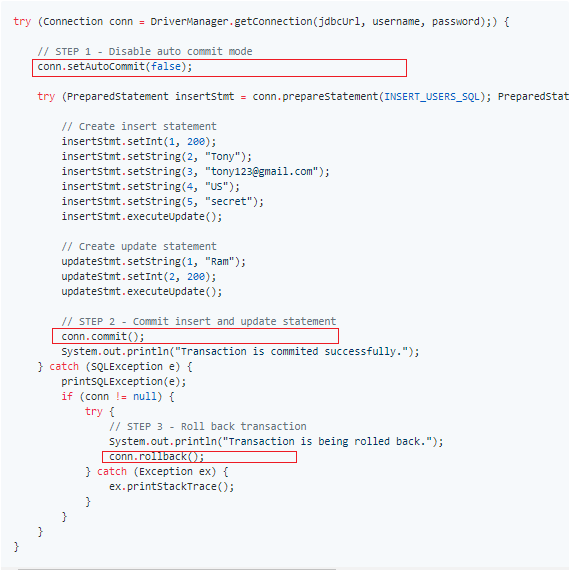

    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torch.utils.data import DataLoader
    from torchvision import transforms, datasets

    from Pytorch21_7_29.conv.lenet5 import Lenet5
    from Pytorch21_7_29.conv.resnet import ResNet18


    def main():
        batch_size = 32

        cifar_train = datasets.CIFAR10('cifar', train=True, transform=transforms.Compose([
            transforms.Resize([32, 32]),
            transforms.ToTensor(),
            # Added so that training and test inputs are normalized identically
            transforms.Normalize(mean=[0.485, 0.456, 0.406],
                                 std=[0.229, 0.224, 0.225])
        ]), download=True)
        cifar_train = DataLoader(cifar_train, batch_size=batch_size, shuffle=True)

        cifar_test = datasets.CIFAR10('cifar', train=False, transform=transforms.Compose([
            transforms.Resize([32, 32]),
            transforms.ToTensor(),
            transforms.Normalize(mean=[0.485, 0.456, 0.406],
                                 std=[0.229, 0.224, 0.225])
        ]), download=True)
        cifar_test = DataLoader(cifar_test, batch_size=batch_size, shuffle=True)

        # next(iter(...)) replaces the Python 2 style iter(...).next()
        x, label = next(iter(cifar_train))
        print("x:", x.shape, 'label:', label.shape)
        # x: torch.Size([32, 3, 32, 32]) label: torch.Size([32])

        # Fall back to the CPU when no GPU is available
        device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
        # model = Lenet5().to(device)
        model = ResNet18().to(device)

        criteon = nn.CrossEntropyLoss()
        optimizer = optim.Adam(model.parameters(), lr=1e-3)
        print(model)

        for epoch in range(1000):

            model.train()
            for batch_idx, (x, label) in enumerate(cifar_train):
                x, label = x.to(device), label.to(device)

                logits = model(x)
                # logits: [b, 10], label: [b]
                loss = criteon(logits, label)  # scalar tensor

                # backward
                optimizer.zero_grad()
                loss.backward()
                optimizer.step()

            # print(epoch, loss.item())

            # Testing does not need a computation graph
            model.eval()
            with torch.no_grad():
                # test
                total_correct = 0
                total_num = 0
                for x, label in cifar_test:
                    x, label = x.to(device), label.to(device)
                    logits = model(x)
                    pred = logits.argmax(dim=1)
                    total_correct += torch.eq(pred, label).float().sum().item()
                    total_num += x.size(0)

                acc = total_correct / total_num
                print(epoch, acc)


    if __name__ == '__main__':
        main()

    """
    Lenet5(
      (conv_unit): Sequential(
        (0): Conv2d(3, 6, kernel_size=(5, 5), stride=(1, 1))
        (1): AvgPool2d(kernel_size=2, stride=2, padding=0)
        (2): Conv2d(6, 16, kernel_size=(5, 5), stride=(1, 1))
        (3): AvgPool2d(kernel_size=2, stride=2, padding=0)
      )
      (fc_unit): Sequential(
        (0): Linear(in_features=400, out_features=120, bias=True)
        (1): ReLU()
        (2): Linear(in_features=120, out_features=84, bias=True)
        (3): ReLU()
        (4): Linear(in_features=84, out_features=10, bias=True)
      )
      (criteon): CrossEntropyLoss()
    )
    ResNet18(
      (conv1): Sequential(
        (0): Conv2d(3, 64, kernel_size=(3, 3), stride=(3, 3))
        (1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
      (blk1): ResBlk(
        (conv1): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
        (bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (extra): Sequential(
          (0): Conv2d(64, 128, kernel_size=(1, 1), stride=(2, 2))
          (1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        )
      )
      (blk2): ResBlk(
        (conv1): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
        (bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (extra): Sequential(
          (0): Conv2d(128, 256, kernel_size=(1, 1), stride=(2, 2))
          (1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        )
      )
      (blk3): ResBlk(
        (conv1): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
        (bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (extra): Sequential(
          (0): Conv2d(256, 512, kernel_size=(1, 1), stride=(2, 2))
          (1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        )
      )
      (blk4): ResBlk(
        (conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
        (bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (extra): Sequential()
      )
      (outlayer): Linear(in_features=512, out_features=10, bias=True)
    )
    """
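The test loop in main.py takes the index of the largest logit as the prediction and divides the number of matches by the number of samples. The following pure-Python sketch mirrors that bookkeeping on two made-up batches with three classes; the data and helper names are hypothetical.

```python
def argmax(values):
    """Index of the largest value (first one on ties)."""
    return max(range(len(values)), key=lambda i: values[i])


def count_correct(logits_batch, labels):
    """Number of samples whose highest-scoring class equals the label."""
    preds = [argmax(logits) for logits in logits_batch]
    return sum(int(p == y) for p, y in zip(preds, labels))


# Two hypothetical test batches: (logits [b, 3], labels [b])
batches = [
    ([[2.0, 0.1, -1.0], [0.3, 0.2, 1.5]], [0, 2]),
    ([[0.0, 1.0, 0.5], [1.2, 0.4, 0.1]], [2, 0]),
]

total_correct = 0
total_num = 0
for logits_batch, labels in batches:
    total_correct += count_correct(logits_batch, labels)
    total_num += len(labels)

print(total_correct / total_num)  # 0.75
```

Accumulating counts across batches, rather than averaging per-batch accuracies, keeps the result correct even when the last batch is smaller than the others.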

lenet5.py

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class Lenet5(nn.Module):
        """
        for cifar10 dataset.
        """
        def __init__(self):
            super(Lenet5, self).__init__()

            self.conv_unit = nn.Sequential(
                # x: [b, 3, 32, 32] => [b, 6, 28, 28]
                nn.Conv2d(3, 6, kernel_size=5, stride=1, padding=0),
                # [b, 6, 28, 28] => [b, 6, 14, 14]
                nn.AvgPool2d(kernel_size=2, stride=2, padding=0),
                # x: [b, 6, 14, 14] => [b, 16, 10, 10]
                nn.Conv2d(6, 16, kernel_size=5, stride=1, padding=0),
                # [b, 16, 10, 10] => [b, 16, 5, 5]
                nn.AvgPool2d(kernel_size=2, stride=2, padding=0),
            )
            # flatten: [b, 16, 5, 5] => [b, 400]
            # fc unit
            self.fc_unit = nn.Sequential(
                nn.Linear(16*5*5, 120),
                nn.ReLU(),
                nn.Linear(120, 84),
                nn.ReLU(),
                nn.Linear(84, 10)
            )

            # self.criteon = nn.MSELoss()  # for regression problems
            self.criteon = nn.CrossEntropyLoss()  # the usual choice for classification

        def forward(self, x):
            """
            :param x: [b, 3, 32, 32]
            :return: logits of shape [b, 10]
            """
            batch_size = x.size(0)
            x = self.conv_unit(x)
            x = x.view(batch_size, -1)  # -1 resolves to 16*5*5
            logits = self.fc_unit(x)
            # pred = F.softmax(logits, dim=1)  # not needed: the cross-entropy loss applies softmax internally
            # loss = self.criteon(logits, y)
            return logits


    def main():
        net = Lenet5()
        tmp = torch.randn(2, 3, 32, 32)
        out = net(tmp)
        print('lenet_out:', out.shape)  # lenet_out: torch.Size([2, 10])


    if __name__ == '__main__':
        main()
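The comment in forward() notes that the cross-entropy loss already contains the softmax, which is why the network returns raw logits. A small pure-Python sketch of that computation for one sample follows; the helper names and the example logits are made up, and the real loss additionally averages over the batch by default.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax of a list of logits."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_sum for z in logits]


def cross_entropy(logits, label):
    """Negative log-probability of the true class, computed from raw logits."""
    return -log_softmax(logits)[label]


logits = [2.0, 1.0, 0.1]
label = 0

# Exponentiating the log-softmax gives probabilities that sum to 1
probs = [math.exp(v) for v in log_softmax(logits)]
print(round(sum(probs), 6))                    # 1.0
print(round(cross_entropy(logits, label), 4))  # 0.417
```

Applying softmax in the model and then passing the result to a cross-entropy loss would apply it twice, which is the mistake the commented-out line guards against.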

resnet.py

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class ResBlk(nn.Module):
        """
        resnet block
        """
        def __init__(self, ch_in, ch_out, stride=1):
            """
            :param ch_in: number of input channels
            :param ch_out: number of output channels
            :param stride: stride of the first convolution and of the 1x1 shortcut
            """
            super(ResBlk, self).__init__()

            # we add stride support for resblk, which is distinct from tutorials.
            self.conv1 = nn.Conv2d(ch_in, ch_out, kernel_size=3, stride=stride, padding=1)
            self.bn1 = nn.BatchNorm2d(ch_out)
            self.conv2 = nn.Conv2d(ch_out, ch_out, kernel_size=3, stride=1, padding=1)
            self.bn2 = nn.BatchNorm2d(ch_out)

            # NOTE: the shortcut is only replaced when the channel counts differ. With
            # ch_in == ch_out and stride != 1 (blk4 below) it stays the identity and is
            # not downsampled, so the addition in forward() relies on broadcasting.
            self.extra = nn.Sequential()
            if ch_out != ch_in:
                # [b, ch_in, h, w] => [b, ch_out, h, w]
                self.extra = nn.Sequential(
                    nn.Conv2d(ch_in, ch_out, kernel_size=1, stride=stride),
                    nn.BatchNorm2d(ch_out)
                )

        def forward(self, x):
            """
            :param x: [b, ch, h, w]
            :return:
            """
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.bn2(self.conv2(out))
            # short cut
            out = self.extra(x) + out
            out = F.relu(out)
            return out


    class ResNet18(nn.Module):
        def __init__(self):
            super(ResNet18, self).__init__()

            self.conv1 = nn.Sequential(
                nn.Conv2d(3, 64, kernel_size=3, stride=3, padding=0),
                nn.BatchNorm2d(64)
            )
            # followed 4 blocks
            # [b, 64, h, w] => [b, 128, h, w]
            self.blk1 = ResBlk(64, 128, stride=2)
            # [b, 128, h, w] => [b, 256, h, w]
            self.blk2 = ResBlk(128, 256, stride=2)
            # [b, 256, h, w] => [b, 512, h, w]
            self.blk3 = ResBlk(256, 512, stride=2)
            # [b, 512, h, w] => [b, 512, h, w]
            self.blk4 = ResBlk(512, 512, stride=2)

            self.outlayer = nn.Linear(512*1*1, 10)

        def forward(self, x):
            """
            :param x:
            :return:
            """
            x = F.relu(self.conv1(x))

            x = self.blk1(x)
            x = self.blk2(x)
            x = self.blk3(x)
            x = self.blk4(x)

            # print("after_conv:", x.shape)  # torch.Size([32, 512, 2, 2])
            # [b, 512, h, w] => [b, 512, 1, 1]
            x = F.adaptive_avg_pool2d(x, [1, 1])
            # print("after_pool:", x.shape)  # torch.Size([32, 512, 1, 1])
            x = x.view(x.size(0), -1)
            x = self.outlayer(x)
            return x


    def main():
        blk = ResBlk(64, 128, stride=2)
        tmp = torch.randn(2, 64, 32, 32)
        out = blk(tmp)
        print("block:", out.shape)  # torch.Size([2, 128, 16, 16])

        x = torch.randn(2, 3, 32, 32)
        model = ResNet18()
        out = model(x)
        print("resnet:", out.shape)  # torch.Size([2, 10])


    if __name__ == '__main__':
        main()
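The spatial sizes inside this ResNet18 can be traced with the convolution size formula. The sketch below follows a 32x32 input through the stem and the four blocks and compares the main branch with the shortcut branch of each block; the helpers conv_out_size and trace_block are illustrative and assume dilation 1.

```python
def conv_out_size(size_in, kernel_size, stride, padding):
    """Spatial output size of a convolution (dilation assumed to be 1)."""
    return (size_in + 2 * padding - kernel_size) // stride + 1


def trace_block(size_in, ch_in, ch_out, stride):
    """Return (main branch size, shortcut size) for one residual block."""
    main = conv_out_size(size_in, 3, stride, 1)  # conv1; conv2 keeps the size
    if ch_in != ch_out:
        shortcut = conv_out_size(size_in, 1, stride, 0)  # 1x1 conv in self.extra
    else:
        shortcut = size_in  # identity shortcut, no downsampling
    return main, shortcut


# Stem: 3x3 convolution, stride 3, no padding
size = conv_out_size(32, kernel_size=3, stride=3, padding=0)
print("stem", size)  # stem 10

blocks = [("blk1", 64, 128), ("blk2", 128, 256), ("blk3", 256, 512), ("blk4", 512, 512)]
results = []
for name, ch_in, ch_out in blocks:
    main, shortcut = trace_block(size, ch_in, ch_out, stride=2)
    results.append((name, main, shortcut))
    print(name, "main", main, "shortcut", shortcut)
    size = main  # valid for the next block as long as both branches agree
# blk1 main 5 shortcut 5
# blk2 main 3 shortcut 3
# blk3 main 2 shortcut 2
# blk4 main 1 shortcut 2
```

In blk4 the two branches disagree (1x1 versus 2x2). The addition still runs because a 1x1 map broadcasts against a 2x2 map, which is consistent with the after_conv shape of [32, 512, 2, 2] noted in the code. A stricter variant, not used in the notes, would build the 1x1 shortcut whenever the stride is not 1.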
